Since I discovered that Nuke was concatenating Transform nodes for me, I liked to break out my transformations into multiple nodes to give myself a bit more flexibility. I would do a stabilize, some keyframe animation, scale up a bit, rotate for a while, matchmove, re-scale, all in different nodes. Nuke was smart about it, and because I knew how not to break concatenation, quality was never an issue. However, one cold, dark afternoon in late 2013, I was asked to provide a SINGLE Transform node to the CG department (they had a way to add it to their 3D camera). My move was built from a dozen nodes, so I needed a way to reduce that to one.

The Bad Way

Giving up on the idea of calculating everything manually, I set off to write a Python script that would calculate a merged Transform node for me, using the math I knew: trigonometry.

This resulted in an extremely heavy script, with some ridiculous calculations. Here is a little snippet of just the math:

```python
from math import cos, sin, radians

# Assumes rotateA/B, scaleA/B, centerA/B_x/y, translateA/B_x/y, width and height
# were read from the two Transform nodes beforehand.

# Let's do our math:
# I rotate a point (I arbitrarily chose the top right corner: width, height) around the A center point, by the rotation value of A.
# The math to rotate a point is:
# newX = cos(rotation) * (position.x - center.x) - sin(rotation) * (position.y - center.y) + center.x
# newY = sin(rotation) * (position.x - center.x) + cos(rotation) * (position.y - center.y) + center.y
# I also then multiply this by my scale
coord_rotA_cenA_x = ( cos( radians( rotateA )) * ( width - centerA_x ) - sin( radians( rotateA )) * ( height - centerA_y ) + centerA_x ) * scaleA
coord_rotA_cenA_y = ( sin( radians( rotateA )) * ( width - centerA_x ) + cos( radians( rotateA )) * ( height - centerA_y ) + centerA_y ) * scaleA
# I rotate the same point (so again width/height) but this time around the center point of the second node.
coord_rotA_cenB_x = ( cos( radians( rotateA )) * ( width - centerB_x ) - sin( radians( rotateA )) * ( height - centerB_y ) + centerB_x ) * scaleA
coord_rotA_cenB_y = ( sin( radians( rotateA )) * ( width - centerB_x ) + cos( radians( rotateA )) * ( height - centerB_y ) + centerB_y ) * scaleA
# Now let's find the difference between these two (we want to cancel that difference via an offset later on)
diff_coordA_x = coord_rotA_cenA_x - coord_rotA_cenB_x
diff_coordA_y = coord_rotA_cenA_y - coord_rotA_cenB_y
# The offset needed to match the transformations of A is the difference between our 2 rotations + the center * (scale-1) + translateA
offsetA_x = diff_coordA_x + ( ( centerB_x - centerA_x ) * ( scaleA - 1 ) ) + translateA_x
offsetA_y = diff_coordA_y + ( ( centerB_y - centerA_y ) * ( scaleA - 1 ) ) + translateA_y
# Let's do the same with the transform values of B, but this time instead of using height and width, we need to use the new coordinates of that point:
coord_rotB_cenB_x = ( cos( radians( rotateB )) * ( coord_rotA_cenA_x - centerB_x ) - sin( radians( rotateB )) * ( coord_rotA_cenA_y - centerB_y ) + centerB_x ) * scaleB
coord_rotB_cenB_y = ( sin( radians( rotateB )) * ( coord_rotA_cenA_x - centerB_x ) + cos( radians( rotateB )) * ( coord_rotA_cenA_y - centerB_y ) + centerB_y ) * scaleB
# Let's offset our center:
centerN_x = centerB_x + offsetA_x
centerN_y = centerB_y + offsetA_y
# Same thing, but offsetting our offset center
coord_rotB_cenN_x = ( cos( radians( rotateB )) * ( coord_rotA_cenA_x - centerN_x ) - sin( radians( rotateB )) * ( coord_rotA_cenA_y - centerN_y ) + centerN_x ) * scaleB
coord_rotB_cenN_y = ( sin( radians( rotateB )) * ( coord_rotA_cenA_x - centerN_x ) + cos( radians( rotateB )) * ( coord_rotA_cenA_y - centerN_y ) + centerN_y ) * scaleB
# Difference between rotation center B and N
diff_coordB_x = coord_rotB_cenB_x - coord_rotB_cenN_x
diff_coordB_y = coord_rotB_cenB_y - coord_rotB_cenN_y
# B offset
offsetB_x = diff_coordB_x + ( offsetA_x * ( scaleB - 1 ) ) + translateB_x
offsetB_y = diff_coordB_y + ( offsetA_y * ( scaleB - 1 ) ) + translateB_y
# Now that we have calculated our variables, it's time to assign everything to the new Transform:
# First calculate the actual values:
new_translate_x = offsetA_x + offsetB_x
new_translate_y = offsetA_y + offsetB_y
new_rotate = rotateA + rotateB
new_scale = scaleA * scaleB
```

I don’t know if it’s clear to you, but it’s not to me. Every time I re-read this code, I get a headache for a few minutes until I remember how it worked.

The full script was working, but flawed. It was quite slow to execute, would frequently crash Nuke when trying to execute on too many transforms at once, and would only work with translation, rotation and uniform scale.

It did the job, though, and since that day I've surprised myself every few weeks by needing it again and again in different situations. New problems arose when coworkers started wanting to use the script. It had never been meant for release, and even running it required typing a bit of Python for the frame range.

The Better Way

Finally, a few weeks ago, I'd had enough. That script deserved a proper release. I went back to the drawing board and decided to re-implement it using a different method: matrices.

I didn’t know how to use matrices in 2013, but having completed Khan Academy’s Matrix course a couple of months ago, I felt ready.

With matrices, the math above was greatly reduced. Here is the actual math in the new function, achieving the same as the previous snippet:

```python
current_matrix = transform_matrix * current_matrix
```

Now that I can wrap my head around.
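To make that one-liner concrete outside Nuke, here is a minimal pure-Python sketch using 3x3 matrices stored as lists of rows, with column vectors. The helpers (`mat_mul`, `node_matrix`, `apply`, `merge`) are hypothetical and not Nuke's API. The sketch assumes each node scales uniformly and rotates about its center before translating, and it ignores skew and Nuke's own ordering options.

```python
import math

def mat_mul(a, b):
    """Multiply two 3x3 matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def node_matrix(tx, ty, rotate, scale, cx, cy):
    """Hypothetical helper: affine matrix for one node (uniform scale, no skew).

    Scales and rotates about (cx, cy), then translates by (tx, ty).
    """
    r = math.radians(rotate)
    a, b = scale * math.cos(r), scale * math.sin(r)
    # Linear part is [[a, -b], [b, a]]; the offset keeps the center fixed.
    return [[a, -b, tx + cx - (a * cx - b * cy)],
            [b, a, ty + cy - (b * cx + a * cy)],
            [0.0, 0.0, 1.0]]

def apply(m, x, y):
    """Transform a 2D point with an affine 3x3 matrix (column-vector convention)."""
    return (m[0][0] * x + m[0][1] * y + m[0][2],
            m[1][0] * x + m[1][1] * y + m[1][2])

def merge(matrices):
    """Fold node matrices, listed upstream to downstream, into a single matrix."""
    current_matrix = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    for transform_matrix in matrices:
        # A downstream node acts after everything above it, so it goes on the left.
        current_matrix = mat_mul(transform_matrix, current_matrix)
    return current_matrix

chain = [node_matrix(10, 0, 0, 1.0, 0, 0), node_matrix(0, 0, 90, 2.0, 0, 0)]
print(apply(merge(chain), 1, 0))
```

Here the point (1, 0) is moved 10 pixels right, then scaled by 2 and rotated 90 degrees, so the print shows roughly (0, 22), up to floating-point noise. Applying the merged matrix once gives the same result as applying every node in turn.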

Using matrices had other advantages too: Nuke’s Transform node creates the matrix for you, and you can access it directly in Python.

It also had challenges:

- I wanted to output a Transform node, not a Matrix, but there is no way to specify a transform’s matrix directly.

- I wanted to support CornerPin nodes, but while these calculate a matrix internally, they do not expose it via Python. (Please join me in my feature request to The Foundry.)

- Transforms do not support perspective (CornerPin) transformations, so I needed a way to output a new CornerPin when necessary.

The first one was the hardest part. While trying to go back from a matrix to Translate, Rotate, Scale, Skew and Center parameters, I found out that multiple combinations of these parameters could result in the same matrix, and that, technically, rotation is a combination of scale and skew. I eventually found what I needed, which is called a QR decomposition. Its Wikipedia page seems very complete, but I couldn’t comprehend it well enough to turn it into code. However, as always, the Web is full of people smarter than me, and this time I found a post by Frederic Wang that explained the math a bit more clearly. His algorithm gave me almost the right result, and I had to make some trial-and-error changes to it to get a 1:1 match. I can now calculate every possible case using Translate, Rotate, ScaleX, ScaleY and SkewX. The result might have different values in some fields than what you would get by calculating manually, but it’s all accurate. I’m basically solving an equation there, and I had too many unknowns to do so, so I set SkewY to 0.
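The decomposition described above can be sketched with a Gram-Schmidt style QR step on the 2x2 linear part. This is a minimal version of my own, not Frederic Wang’s code and not the script’s. It assumes the composition M = T(translate) · T(center) · R(rotate) · ShearX(skew) · S(scaleX, scaleY) · T(−center), fixes SkewY at 0, and returns skew as a plain shear factor. How that factor maps onto Nuke’s skew knob (units, skew order) is an assumption I haven’t checked. `compose_affine` and `decompose_affine` are hypothetical names.

```python
import math

def compose_affine(tx, ty, rotate, sx, sy, skew, cx=0.0, cy=0.0):
    """Build M = T(t) T(c) R(rotate) ShearX(skew) S(sx, sy) T(-c) as 3x3 rows."""
    r = math.radians(rotate)
    cs, sn = math.cos(r), math.sin(r)
    u01 = skew * sy  # ShearX(skew) * S(sx, sy) = [[sx, skew*sy], [0, sy]]
    a, c = cs * sx, cs * u01 - sn * sy
    b, d = sn * sx, sn * u01 + cs * sy
    # Offset = t + center - L * center, so the center acts as the pivot.
    e = tx + cx - (a * cx + c * cy)
    f = ty + cy - (b * cx + d * cy)
    return [[a, c, e], [b, d, f], [0.0, 0.0, 1.0]]

def decompose_affine(m, cx=0.0, cy=0.0):
    """Recover translate, rotate, scale and skewX (skewY = 0) for a chosen center."""
    (a, c, e), (b, d, f) = m[0], m[1]
    sx = math.hypot(a, b)  # length of the first column
    if sx == 0.0:
        raise ValueError("degenerate matrix: first column is zero")
    rotate = math.degrees(math.atan2(b, a))
    sy = (a * d - b * c) / sx  # determinant / sx (negative if mirrored)
    if sy == 0.0:
        raise ValueError("degenerate matrix: zero determinant")
    # Upper-right term of the triangular factor, turned into a shear factor.
    skew = (a * c + b * d) / sx / sy
    # Invert the offset formula: t = offset - center + L * center.
    tx = e - cx + (a * cx + c * cy)
    ty = f - cy + (b * cx + d * cy)
    return {"translate": (tx, ty), "rotate": rotate,
            "scale": (sx, sy), "skewX": skew, "center": (cx, cy)}
```

Decomposing the same matrix around a different center gives a different translate, but rebuilding from those values produces the identical matrix, which is one way the “different values in some fields, but all accurate” effect shows up.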

I thought the second point would be easier, and it was, but it still proved a bit more challenging than expected: the solution I had in mind didn’t work. I ended up recycling code from Ivan Busquets and Magno Borgo (who was himself adapting code from Ivan, Pete O’Connell, and me). All of these only calculated the matrix from the 4 corners, though, ignoring the extra matrix and the inverse checkbox, so I added those. I tried for a while to support the option to disable some corners, but gave up since I never use those functions myself and it was more work than I was willing to do.
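For reference, a CornerPin’s matrix is a homography that maps the four ‘from’ corners onto the four ‘to’ corners. The sketch below solves it with the textbook eight-equation linear system (bottom-right entry fixed to 1); it is not Ivan Busquets’ or Magno Borgo’s code. The `invert` flag simply returns the inverse matrix, which is my assumption about what the inverse checkbox amounts to, and the extra matrix is left out because I’m not certain in which order Nuke combines it. All function names are hypothetical.

```python
def solve(a, rhs):
    """Gauss-Jordan elimination with partial pivoting for a small dense system."""
    n = len(rhs)
    m = [row[:] + [rhs[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system: are three corners collinear?")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for k in range(col, n + 1):
                    m[r][k] -= factor * m[col][k]
    return [m[i][n] / m[i][i] for i in range(n)]

def invert3(m):
    """Inverse of a 3x3 matrix via the adjugate."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    if det == 0:
        raise ValueError("matrix is not invertible")
    adj = [[e * i - f * h, c * h - b * i, b * f - c * e],
           [f * g - d * i, a * i - c * g, c * d - a * f],
           [d * h - e * g, b * g - a * h, a * e - b * d]]
    return [[v / det for v in row] for row in adj]

def cornerpin_matrix(src, dst, invert=False):
    """Homography H with H * (x, y, 1) proportional to (u, v, 1) for each corner pair."""
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u * (h6*x + h7*y + 1) = h0*x + h1*y + h2, rearranged to be linear in h.
        rows.append([x, y, 1.0, 0.0, 0.0, 0.0, -u * x, -u * y])
        rhs.append(u)
        rows.append([0.0, 0.0, 0.0, x, y, 1.0, -v * x, -v * y])
        rhs.append(v)
    h = solve(rows, rhs)
    hm = [h[0:3], h[3:6], [h[6], h[7], 1.0]]
    return invert3(hm) if invert else hm

def project(m, x, y):
    """Apply a 3x3 matrix to a 2D point, including the perspective divide."""
    w = m[2][0] * x + m[2][1] * y + m[2][2]
    return ((m[0][0] * x + m[0][1] * y + m[0][2]) / w,
            (m[1][0] * x + m[1][1] * y + m[1][2]) / w)
```

Projecting each ‘from’ corner through the result should land on its ‘to’ corner, and the inverted matrix sends the ‘to’ corners back.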

For the third point, I could have easily stuffed my result matrix into the CornerPin’s extra_matrix (which is an option in my script), but I wanted the result to be a proper, editable CornerPin, so I made a function that projects the 4 corners through my result matrix and puts them in the ‘to’ knobs. (Oh, did I mention that the Transform’s matrix knob is a Transform2d_Knob, while the CornerPin’s transform_matrix knob is an IArray_Knob, and that these two work in very different ways? Well done, The Foundry, well done...)
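Turning a result matrix into an editable CornerPin then only needs the format’s corners pushed through it. In this sketch `cornerpin_values` is a hypothetical helper that returns plain numbers. It assumes corners 1 to 4 are bottom-left, bottom-right, top-right and top-left (my understanding of CornerPin2D’s defaults), and it leaves out writing the values into the `to1` to `to4` knobs, since that part needs Nuke.

```python
def project(m, x, y):
    """Apply a 3x3 matrix to a 2D point, dividing by w for perspective."""
    w = m[2][0] * x + m[2][1] * y + m[2][2]
    if w == 0:
        raise ValueError("point maps to infinity")
    return ((m[0][0] * x + m[0][1] * y + m[0][2]) / w,
            (m[1][0] * x + m[1][1] * y + m[1][2]) / w)

def cornerpin_values(matrix, width, height):
    """'from' = the format corners, 'to' = the same corners pushed through matrix."""
    # Assumed order: 1 bottom-left, 2 bottom-right, 3 top-right, 4 top-left.
    corners = [(0.0, 0.0), (float(width), 0.0),
               (float(width), float(height)), (0.0, float(height))]
    return {"from": corners,
            "to": [project(matrix, x, y) for x, y in corners]}

shift = [[1.0, 0.0, 10.0], [0.0, 1.0, 5.0], [0.0, 0.0, 1.0]]
print(cornerpin_values(shift, 1920, 1080)["to"][2])  # (1930.0, 1085.0)
```

Because the perspective divide is included, the same helper also works when the merged matrix came from CornerPins and is no longer affine.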

The rest was some cleanup: making a little user interface for the functions, printing some cool little matrices while the code runs, adding progress bars, etc.

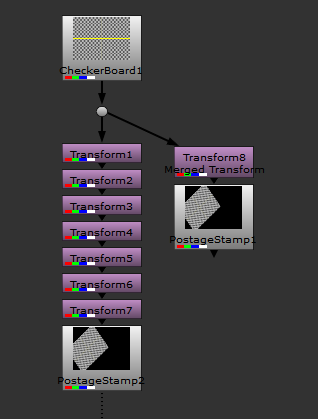

This is the result of running the script.

To download the full final script: Nukepedia

Edit: This tool has been reworked and is now part of a larger toolset for manipulating vectors and matrices: nuke-vector-matrix-toolset

Interesting!! But it’s a really good habit to separate transforms.

Sure, it’s great to separate transforms, but it also becomes annoying if you need to invert a whole group of transforms, or if you want to use the result of all the transforms directly in a roto node, or other node that has its own transform knobs.

Yes, this is very handy when a track has to be offset, or when two trackers are used to lock down a stabilize and then we need to invert or retime that data somehow. Inverting and/or retiming multiple transforms is a pain and error-prone.
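Once the chain is a single matrix, inverting it is one closed-form step rather than flipping every node: for an affine matrix with linear part L and offset t, the inverse has linear part L⁻¹ and offset −L⁻¹t. A minimal sketch in the same list-of-rows convention; `invert_affine` is a hypothetical helper, and retiming (sampling the merged matrix at other frames) isn’t covered.

```python
def invert_affine(m):
    """Inverse of a 2D affine matrix [[a, c, e], [b, d, f], [0, 0, 1]]."""
    (a, c, e), (b, d, f) = m[0], m[1]
    det = a * d - b * c
    if det == 0:
        raise ValueError("transform collapses the image; no inverse")
    # Inverse of the 2x2 linear part [[a, c], [b, d]].
    ia, ic = d / det, -c / det
    ib, id_ = -b / det, a / det
    # New offset is -(L^-1 * t).
    return [[ia, ic, -(ia * e + ic * f)],
            [ib, id_, -(ib * e + id_ * f)],
            [0.0, 0.0, 1.0]]
```

Multiplying the result with the original matrix gives the identity, so one inverted node can stand in for the whole reversed chain.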

So I downloaded this in the hope of merging my transforms, while forgetting a key detail… they’re TransformGeos… Is there any way of doing that? One has animation and the other is just a position offset (I think).

The same method can pretty much be applied, since TransformGeo and Axis both have matrices as well. Nuke’s Matrix4 object actually has methods for converting back into translate, rotate and scale, so it’s even easier. There are some examples on the Nuke forum if you search for exporting cameras.
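For 3D nodes the same idea works with 4x4 matrices. The pure-Python sketch below uses hypothetical helpers, not Nuke’s Matrix4 API, and its conventions (column vectors, translation in the last column) may differ from how Matrix4 stores things. It merges two matrices and reads back translation and per-axis scale, assuming no shear and positive scale. It stops at a rotation matrix rather than Euler angles, since that conversion depends on the rotation order, which is where the Matrix4 helpers mentioned above come in.

```python
import math

def mat4_mul(a, b):
    """Multiply two 4x4 matrices stored as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def translate4(tx, ty, tz):
    return [[1.0, 0.0, 0.0, tx], [0.0, 1.0, 0.0, ty],
            [0.0, 0.0, 1.0, tz], [0.0, 0.0, 0.0, 1.0]]

def scale4(sx, sy, sz):
    return [[sx, 0.0, 0.0, 0.0], [0.0, sy, 0.0, 0.0],
            [0.0, 0.0, sz, 0.0], [0.0, 0.0, 0.0, 1.0]]

def split_trs(m):
    """Translation, per-axis scale and rotation matrix of an affine 4x4 (no shear assumed)."""
    translation = (m[0][3], m[1][3], m[2][3])
    # Each of the first three columns is a rotated axis stretched by its scale.
    scale = tuple(math.sqrt(sum(m[r][c] ** 2 for r in range(3))) for c in range(3))
    rotation = [[m[r][c] / scale[c] for c in range(3)] for r in range(3)]
    return translation, scale, rotation

frame_matrix = mat4_mul(translate4(1.0, 2.0, 3.0), scale4(2.0, 2.0, 2.0))
offset = translate4(10.0, 0.0, 0.0)
t, s, rot = split_trs(mat4_mul(offset, frame_matrix))
print(t, s)  # (11.0, 2.0, 3.0) (2.0, 2.0, 2.0)
```

For an animated TransformGeo, the merge would be repeated per frame, exactly as with the 2D case.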

This is super, super helpful. I had the idea but didn’t have enough math knowledge to do it, lol. Thanks, dude!

Do you think it’s possible to add ‘reformats’ to the math?

It’s possible, but probably not super straightforward. As far as I know, reformats don’t expose their matrices, though I never tried it out. Nuke does it under the hood, so mathematically it’s definitely possible.

I just played around with the idea to add ‘reformats’ to the mix.

It might be a bit tricky, though, because the node not only rescales (in combination with a rotation and a position change if you switch ‘center’ off or ‘turn’ on) but also changes the underlying format. And if that involves a change in aspect ratio, all following nodes are affected.

My initial idea was to translate the operations done by the reformat into a transform node, then check at the end of the script whether the format changes and, if so, add a neutral (resize type none) reformat node to apply it. That way you might end up with two nodes, but it basically does the trick, until you have an aspect-ratio change…

There are some tools on Nukepedia to translate CornerPins and transforms to another format (such as the fresh v2 of eSmartReformat by Giovanni Ermes Vincenti). So perhaps one can combine all those tricks into a working version. But it won’t be “just” calculating matrices any more.

Still, your tool already helped me multiple times, so thank you a lot for it!

It shouldn’t be too hard to implement, reformats can also be thought of as a matrix.

I’d probably do all the transforms and reformats as matrices without actually thinking of the format, and then at the end add a reformat to match the end format, with the resize mode set to None and no recenter.
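As a rough sketch of that approach, a Reformat with center enabled reduces to a scale plus an offset that keeps the image centered, which can then join the chain like any other node matrix. `reformat_matrix` is hypothetical, and its resize-type rules (width, height, fit, fill, distort, none) are my reading of Reformat’s behavior; flip, flop, turn, pixel aspect and any rounding Nuke does are ignored.

```python
def reformat_matrix(in_w, in_h, out_w, out_h, resize="width", center=True):
    """Hypothetical 3x3 matrix approximating a Reformat (no flip/flop/turn)."""
    kx, ky = out_w / in_w, out_h / in_h
    if resize == "width":
        sx = sy = kx
    elif resize == "height":
        sx = sy = ky
    elif resize == "fit":
        sx = sy = min(kx, ky)  # whole image stays visible
    elif resize == "fill":
        sx = sy = max(kx, ky)  # output format is fully covered
    elif resize == "distort":
        sx, sy = kx, ky
    elif resize == "none":
        sx = sy = 1.0
    else:
        raise ValueError("unknown resize type: %s" % resize)
    # With center on, the middle of the input lands on the middle of the output.
    tx = out_w / 2.0 - sx * in_w / 2.0 if center else 0.0
    ty = out_h / 2.0 - sy * in_h / 2.0 if center else 0.0
    return [[sx, 0.0, tx], [0.0, sy, ty], [0.0, 0.0, 1.0]]
```

With every reformat expressed this way, the final neutral Reformat (resize none, no recenter) only has to switch the format, as described above.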

Just saw this comment again: I added reformat support a few months ago, and it essentially works the way you described here.

Thanks to your work, I saved one of my artists 4 hours of work that would otherwise have been needed to redo it in Mocha.

It’s very well documented hard work, thank you Erwan!

Great Tool, thanks Erwan!

Great tool! There is one little bug I can’t find a way around.

After I convert multiple transforms/CornerPins (with reference frame = no transform, on frame 1000) into 1 CornerPin, if I change the reference frame, let’s say from 1000 to 1100, my track starts to wobble. And the bigger the change in reference frame, the more wobble I get.

Did I miss something?

I change the reference frame just by copying “to” into “from” and removing the animation.

I also tried the same thing with the matrix, but got the same result.